Counting and Radix Sorts

For some element types, we can do sorting that is not based on direct comparisons. In other words, sometimes we can exploit properties of the elements to perform faster sorting. In the following, we consider

counting sort that is applicable for some small element sets, and

radix sorts that exploit the structure of the elements.

Counting sort

Assume that the set of possible elements is, or is easily mappable to, the set \(\Set{0,...,k-1}\) for some reasonably small constant \(k\). For instance, if we wish to sort an array of bytes, then \(k = 256\). Similarly, \(k = 6\) when sorting the students of a course by their grade. The idea in counting sort is to

count the occurrences of each element in the array, and

use the cumulated counts to indicate where each element should be sorted to.

In pseudocode, the algorithm can be expressed as follows.

Example: Counting sort

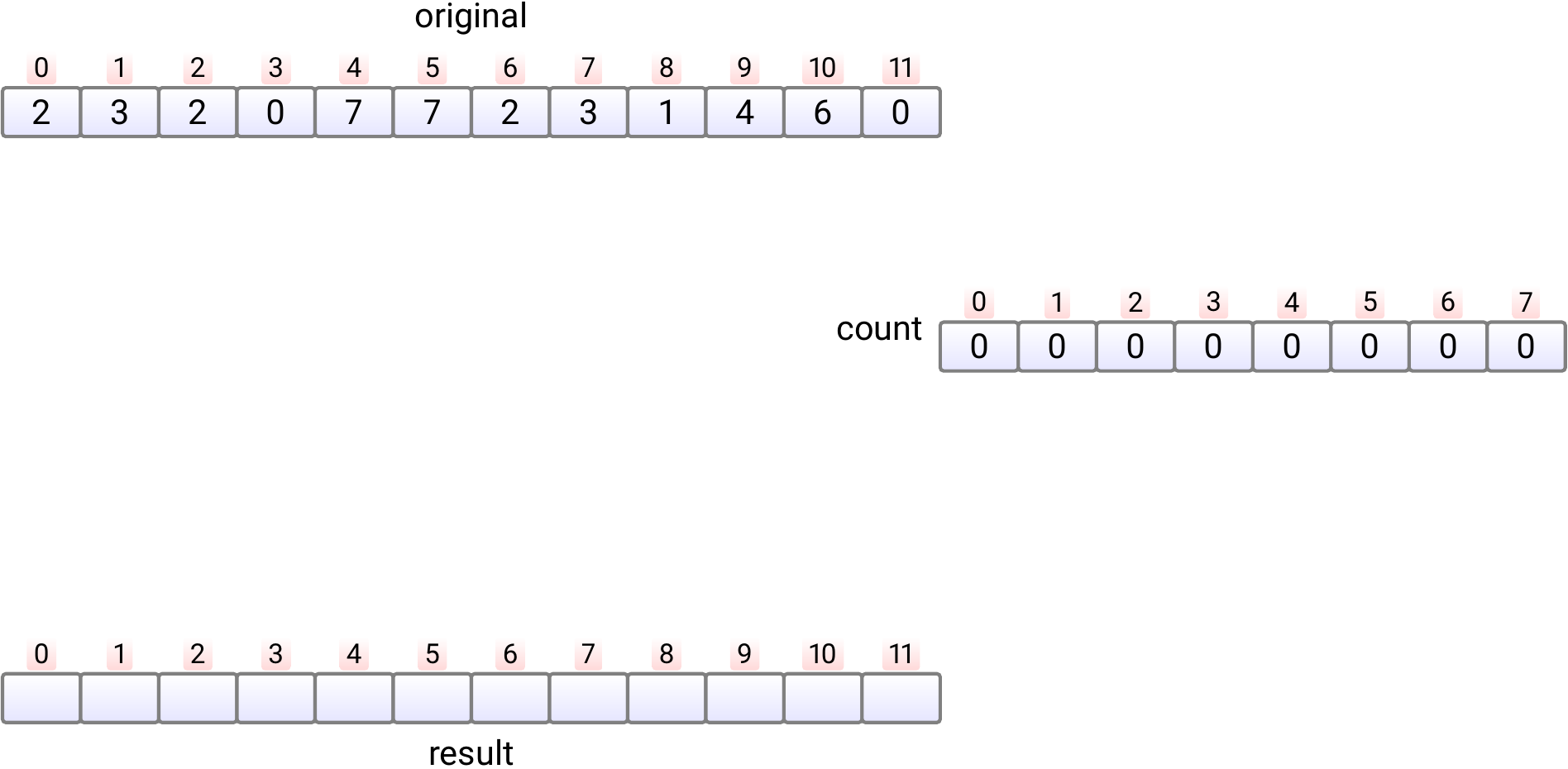

Let’s apply counting sort to an array of octal digits 0,1,…,7.

In phase 1, we allocate the auxiliary

countandresultarrays and initialize thecountarray with 0s. Takes time \(\Theta(k)\) and here \(k = 8\).

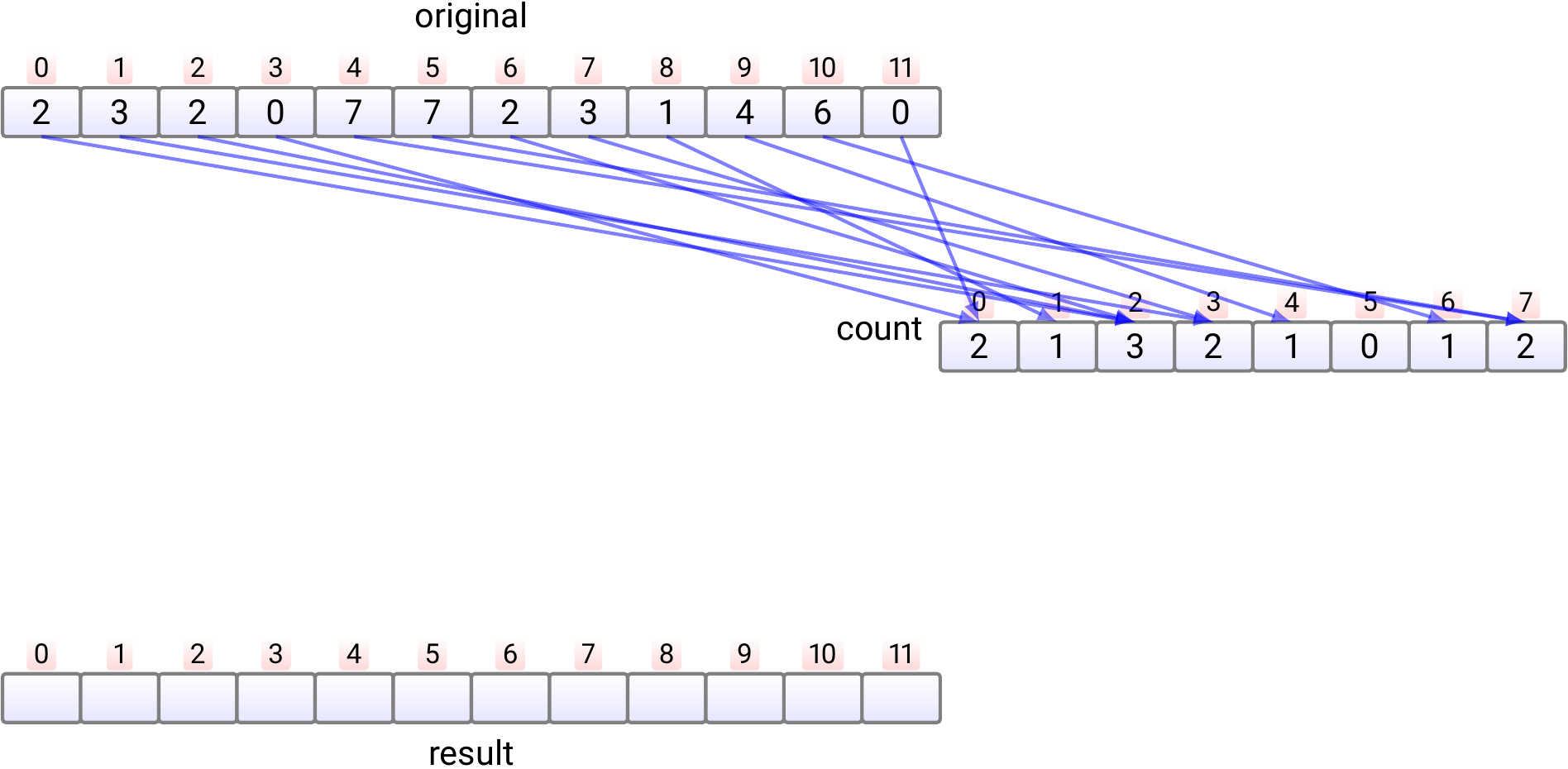

In phase 2, we count, in \(n\) steps, in each position \(count[j]\) how many times the element \(j\) occurs in the input array. This phase take time \(\Theta(n)\).

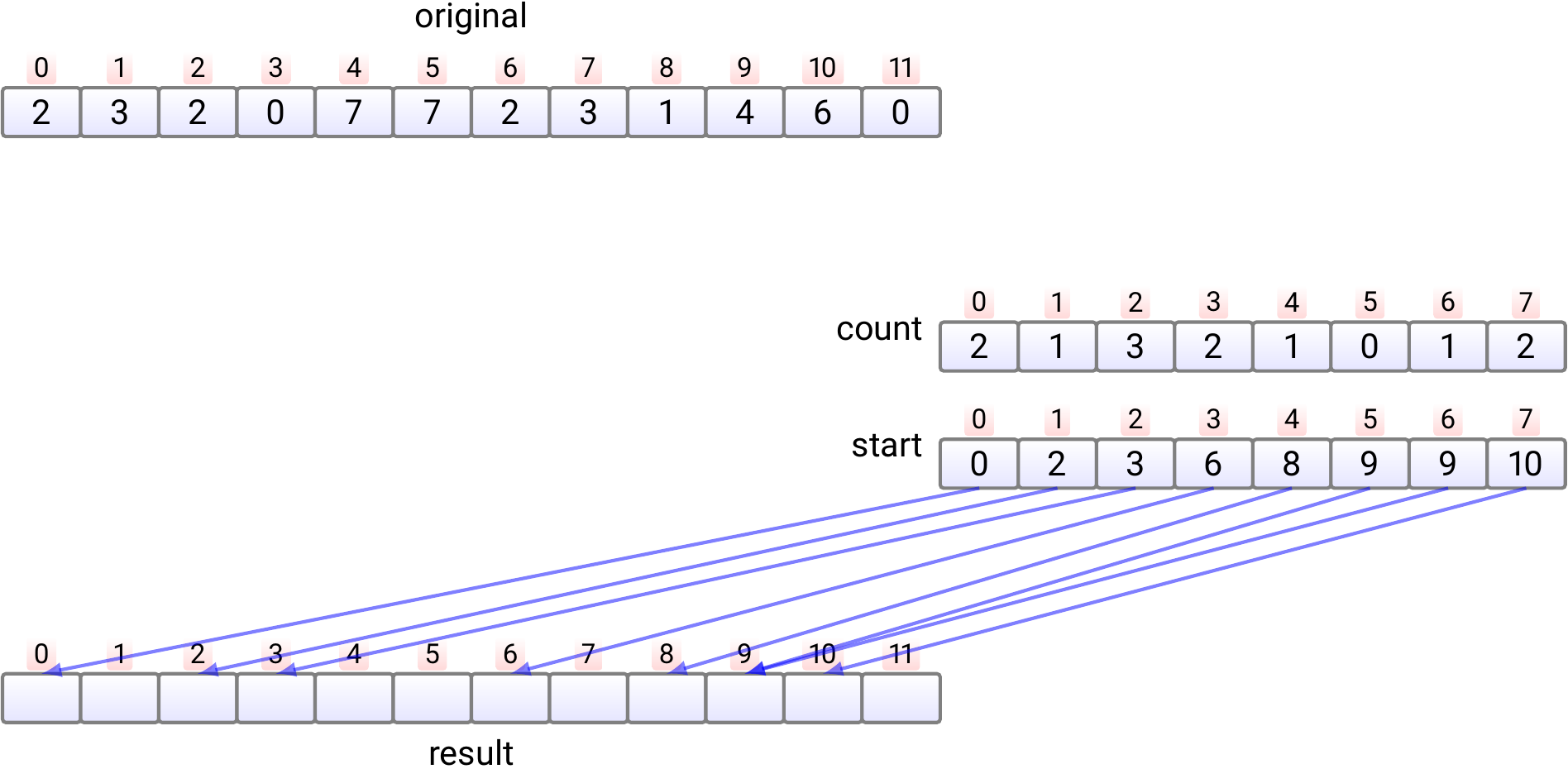

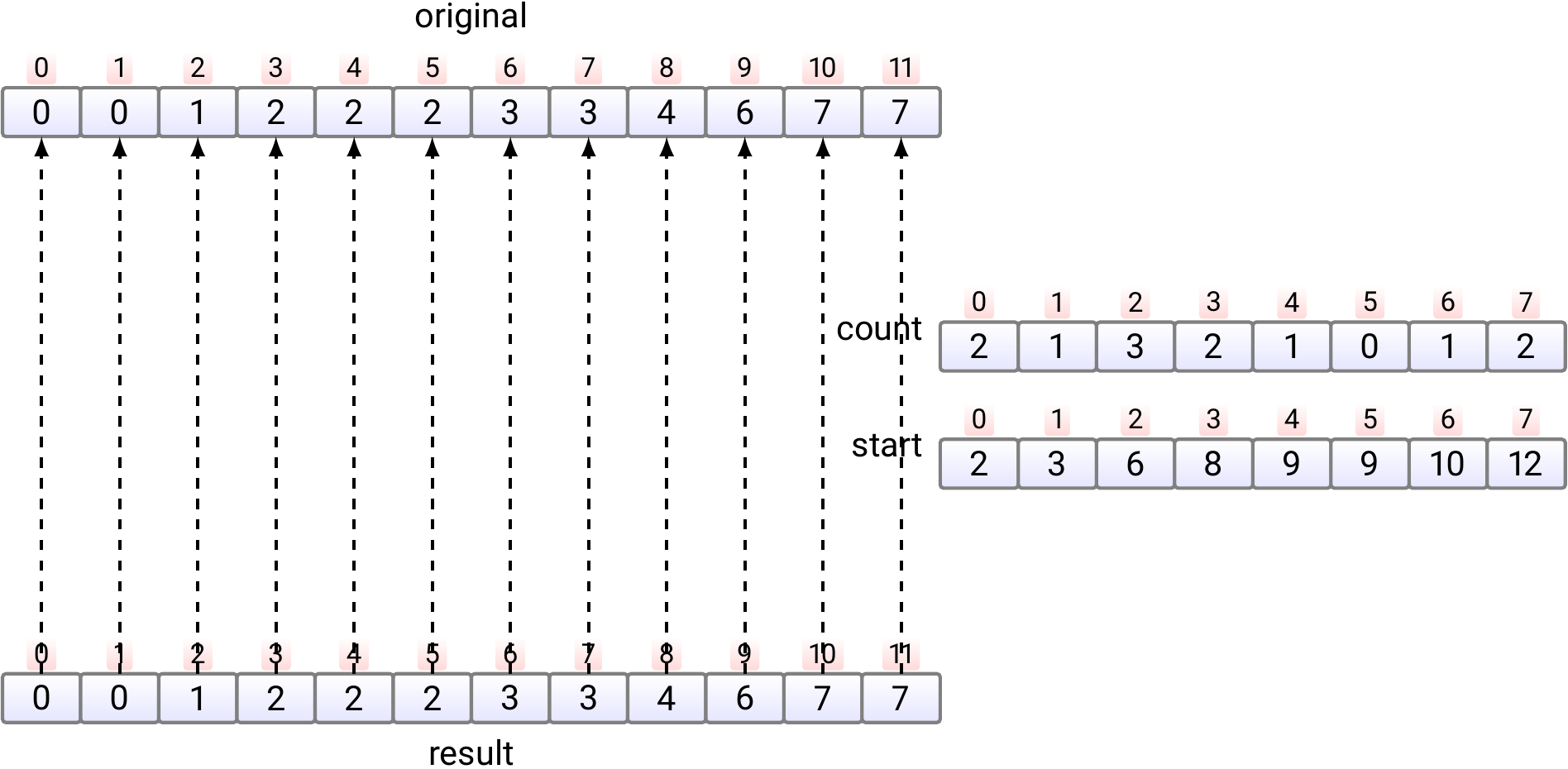

In phase 3,

For each value \(j\), compute \(start[j] = \sum_{l = 0}^{j-1} count[l]\) which tells the position of the first occurrence of the element \(j\) in the sorted

resultarray Takes time \(\Theta(k)\).

Note: as we will not use the

countafter this, we could compute the information of thestartarray directly into it, saving some memory allocation.

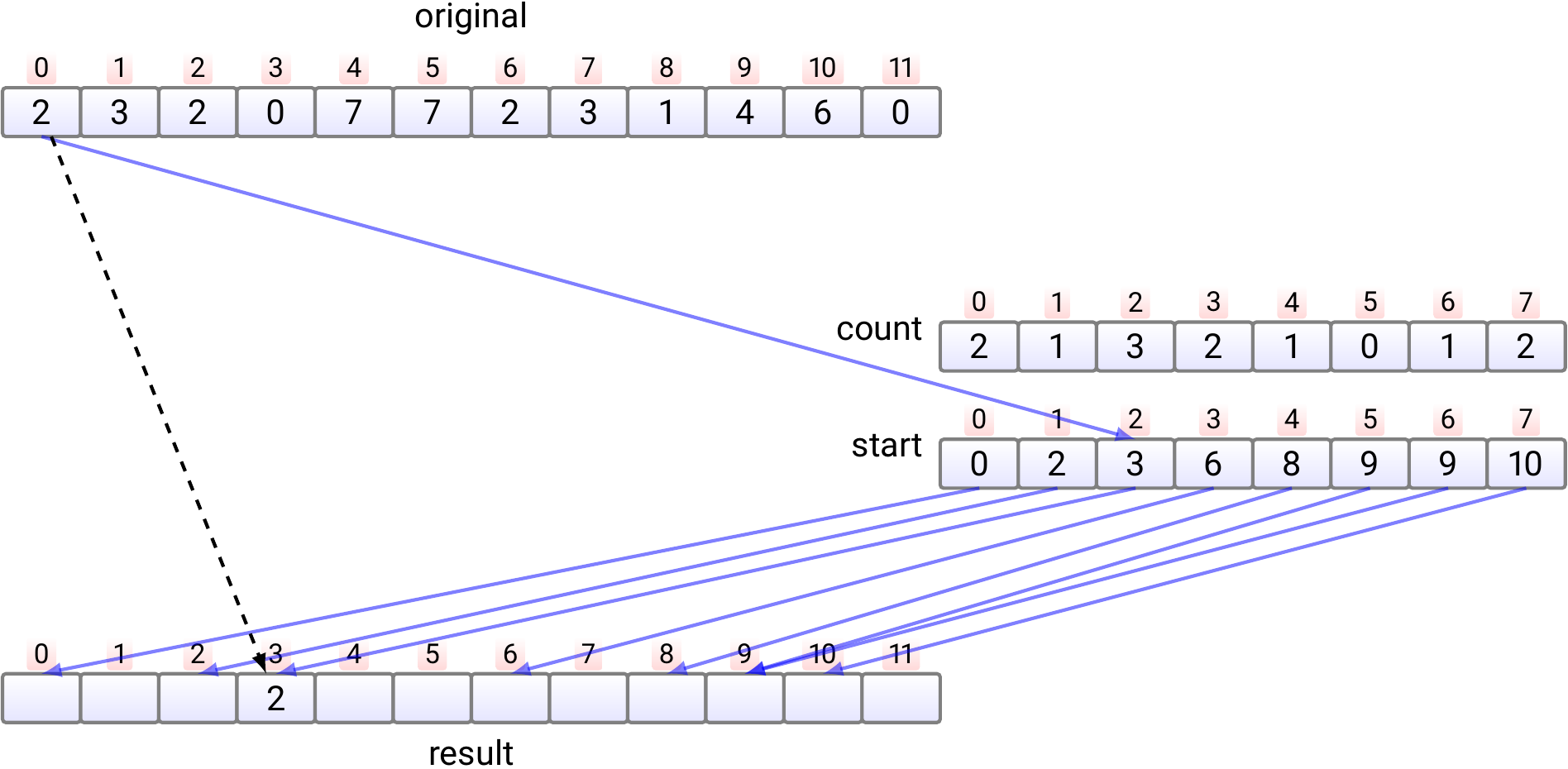

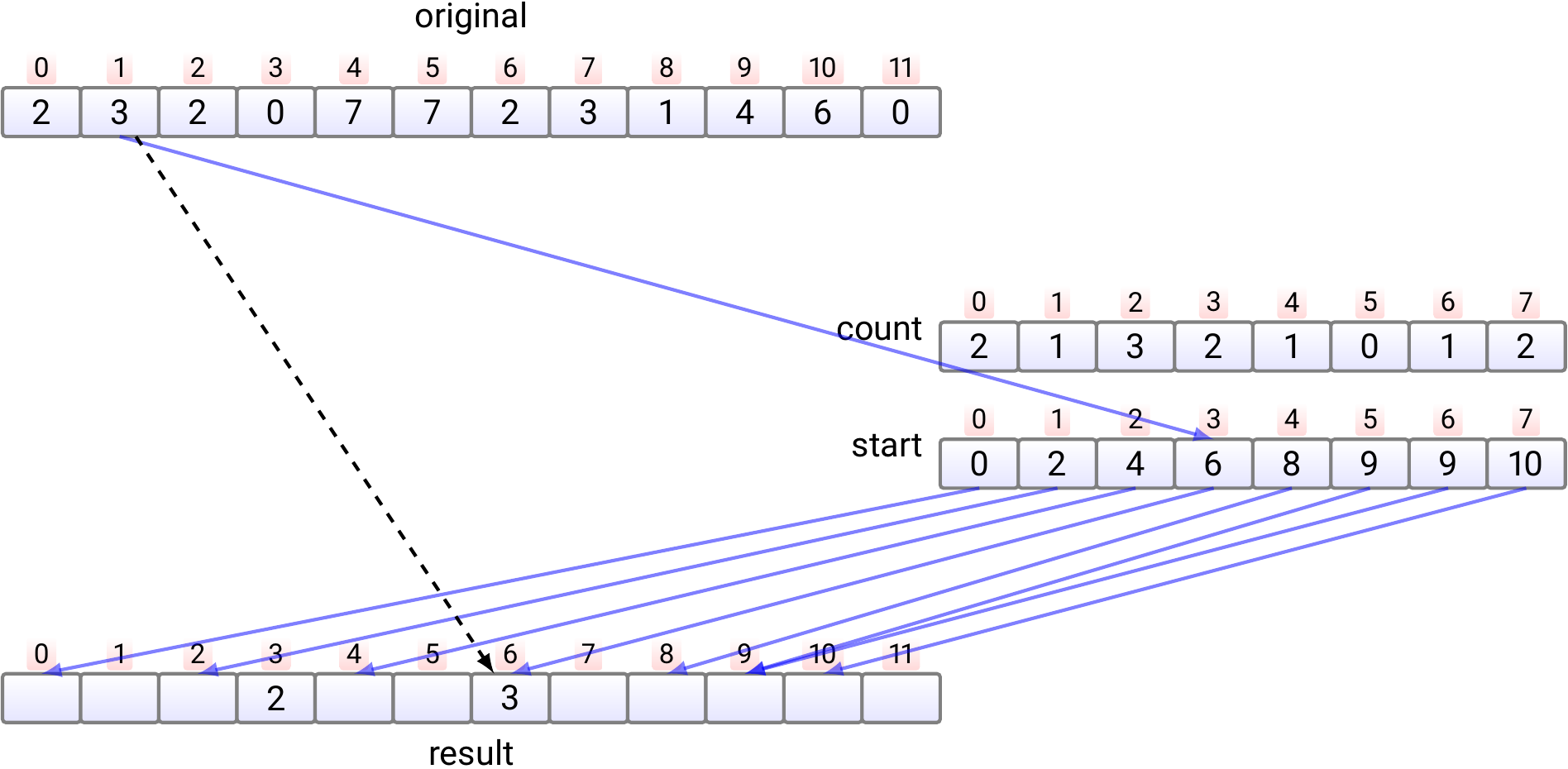

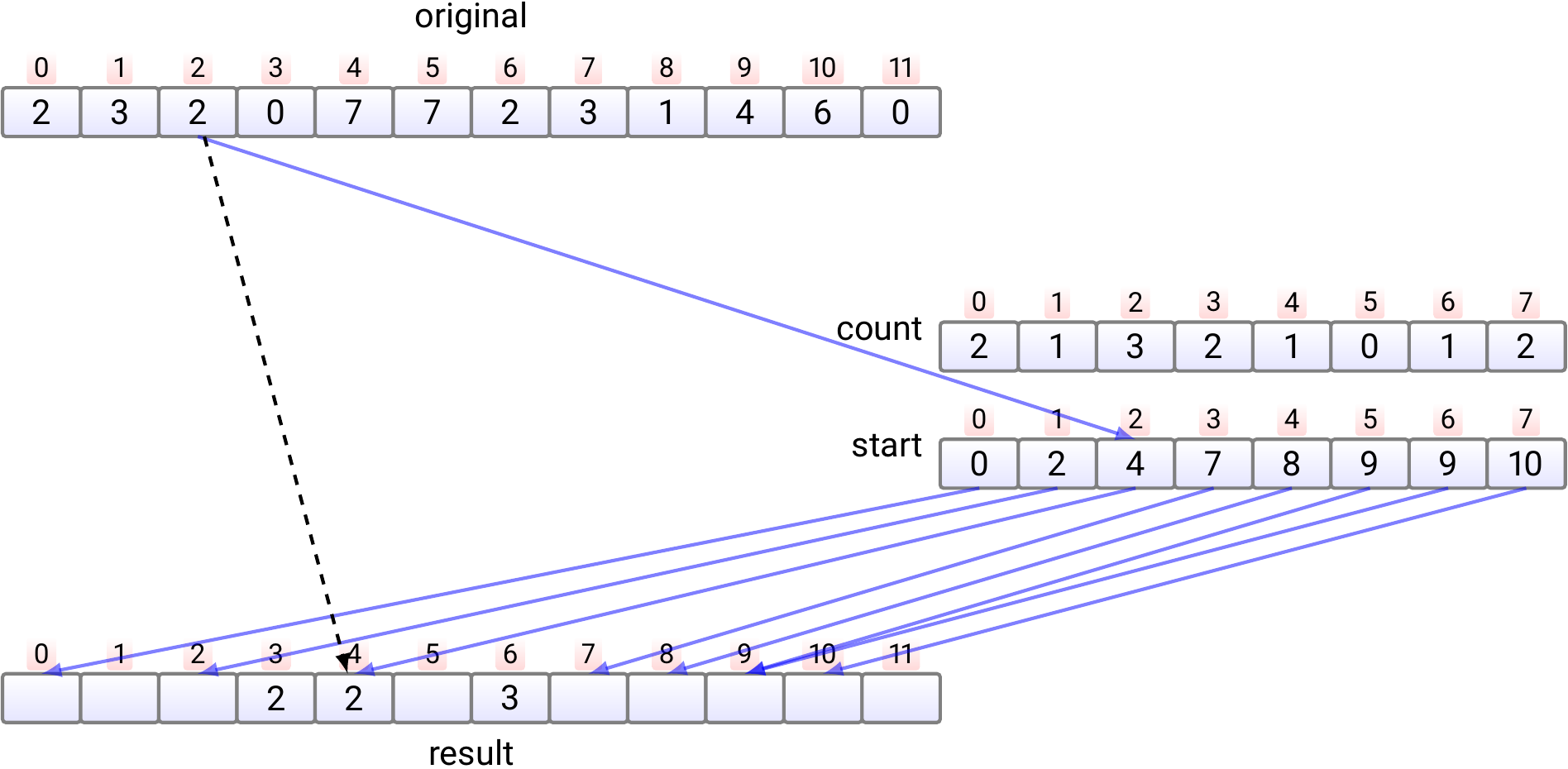

In phase 4, the elements in the original array are moved, one by one, into the correct position in the

resultarray.Copy the element \(e_0\) at index 0 to the index \(start[e_0]\) in the

resultarray. Increment \(start[e_0]\) by one.

Copy the element \(e_1\) at index 1 to the index \(start[e_1]\) in the

resultarray. Increment \(start[e_1]\) by one.

Copy the element \(e_2\) at index 2 to the index \(start[e_2]\) in the

resultarray. Increment \(start[e_2]\) by one.

And so on.

Finally, if needed, copy the sorted array back from the

resultarray to the original array. This phase is not shown in the pseudocode and is not always required.

Properties:

Stable. Why?

Running time is \(\Oh{k + n}\), which is \(\Oh{n}\) as \(k\) is a constant.

The algorithm uses auxiliary arrays of sizes \(n\) and \(k\), and thus does not work “in place” but needs \(\Oh{k + n}\) extra memory.

A very competitive algorithm when \(k\) is small and \(n\) is large.

Most-significant-digit-first radix sort

Assume that

the elements are sequences of digits from \(0,....,k-1\) and

we wish to sort them in lexicographical order.

For instance,

32-bit integers are sequences of length \(4\) of \(8\)-bit bytes, and

ASCII-strings are sequences of ASCII-characters.

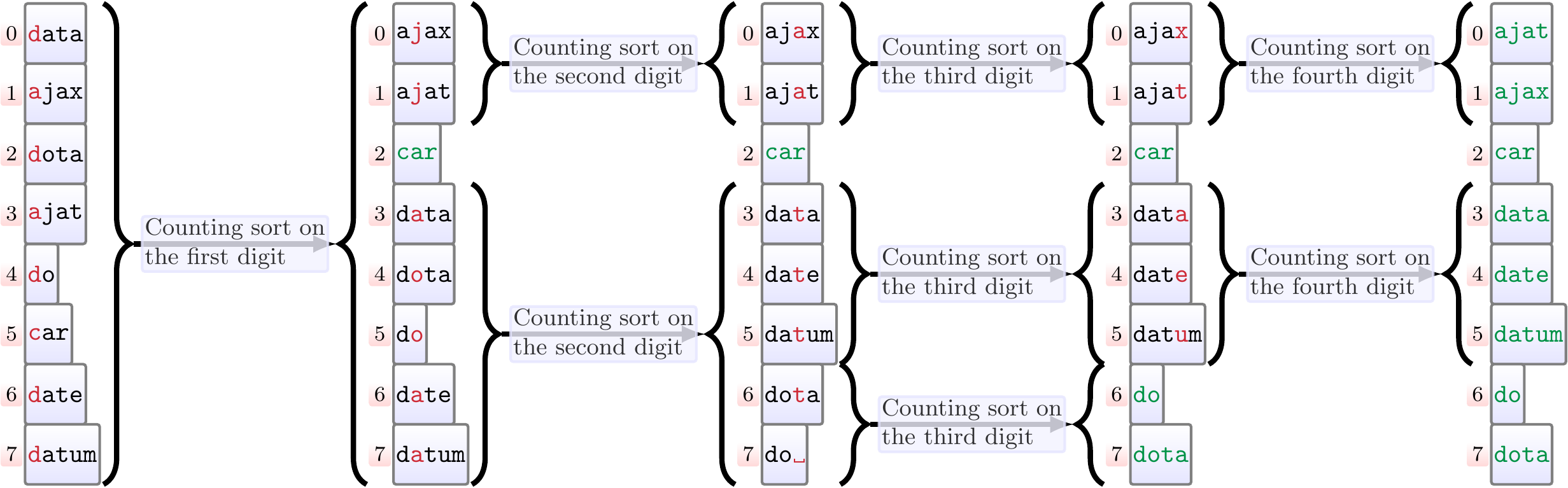

The most-significant-digit-first radix sort

first sorts the elements according to their first (most significant) digit by using counting sort, and

then, recursively, sorts each sub-array that (i) has the same most significant digit and (ii) is of length two or more, by considering sequences starting from the second digit.

Example: Most-significant-digit-first radix sort

The figure below illustrates the recursive calls done when sorting strings. As the sequences may not be of the same length, each sequence can be thought to end with a special symbol ␣ that is the smallest digit (for instance, in the C language strings are usually ending with the byte 0).

Properties:

Stable

Running time is \(\Oh{dnk}\), which is \(\Oh{dn}\) for a constant \(k\), where \(d\) is the maximum length of the sequences

Uses counting sort as a subroutine, which requires \(\Oh{k+n}\) amount of extra memory.

Note: variants of most-significant-digit-first radix sort with different properties exist, please see e.g. Wikipedia.

Least-significant-digit-first radix sort

Again, assume that the elements are sequences of digits from \(0,....,k-1\) and we wish to sort them in lexicographical order. But now also assume that all the elements are of the same length \(d\). For instance, the elements could be

32-bit integers are sequences of length \(4\) of \(8\)-bit bytes, or

ASCII-strings of the same length, such as Finnish personal identity codes.

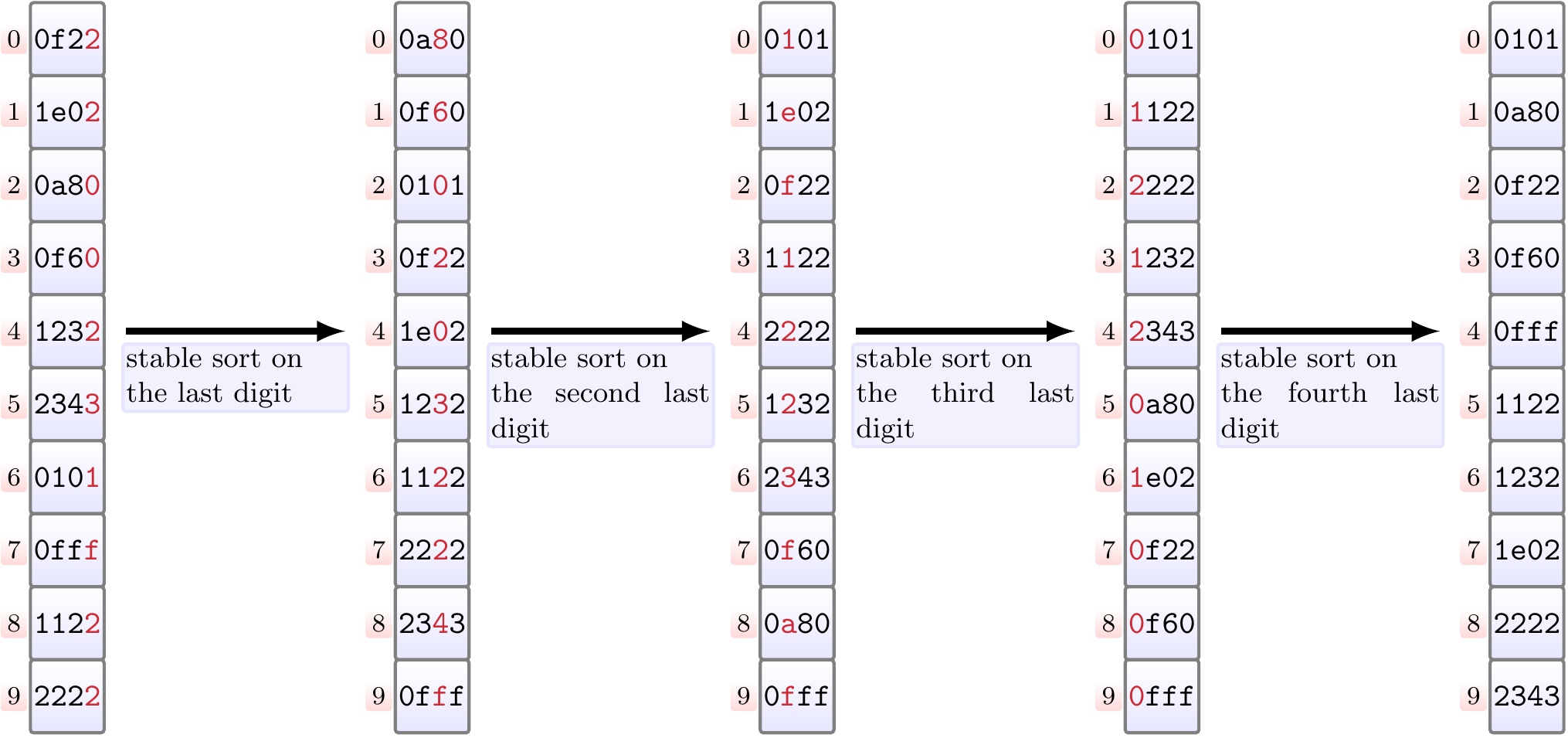

The least-significant-digit-first radix sort

first sorts the elements according to their last (least significant) digit by using the stable counting sort, and

then, iteratively, sorts the elements according to their second-last digit by using the stable counting sort and so on.

Example: Least-significant-digit-first radix sort

Sorting 16-bit unsigned (hexadecimal) numbers by interpreting them as sequences of four 4-bit digits.

Properties of least-significant-digit-first radix sort:

Stable.

Works in time \(\Oh{d(k + n)}\) as, in the worst case, it makes \(d\) calls to counting sort.

As counting sort is used as a subroutine, it does not work “in place” but needs \(\Oh{k + n}\) extra memory.

Implementation and experimental evaluation are left as a programming exercise.

Note that radix sort is used for sorting integers in the CUDA GPU Thrust library, see

Satish, M. Harris and M. Garland: Designing Efficient Sorting Algorithms for Manycore GPUs, Proc. IEEE IDPDS 2009 [access via Aalto lib]